OpenFst Examples

OpenFst Examples

Reading the quick tour first is recommended. That includes a simple example of FST application using either the C++ template level or the shell-level operations. The advanced usage topic contains an implementation using the template-free intermediate scripting level as well.

The following data files are used in the examples below:

| File | Description | Source |

|---|---|---|

| wotw.txt | (normalized) text of H.G. Well's War of the Worlds | public domain |

| wotw.lm.gz | 5-gram language model for wotw.txt in OpenFst text format |

www.opengrm.org |

| wotw.syms | FST symbol table file for wotw.lm |

www.opengrm.org |

| ascii.syms | FST symbol table file for ASCII letters | Python |

| lexicon.txt.gz | letter-to-token FST for wotw.syms |

see first example below |

| lexicon_opt.txt.gz | optimized letter-to-token FST for wotw.syms |

see first example below |

| downcase.txt | ASCII letter-to-downcased letter FST | awk 'NR>1 { print 0,0,$1,tolower($1) } ; END { print 0 }' <ascii.syms >downcase.txt |

- For the most part, we illustrate with the shell-level commands for convenience. (On non-Posix systems, there may be issues with binary file I/O to standard input and output. If so, pass input and output files as program arguments instead.)

- The fstcompose operation is used often here. Typically, one or both of the input FSTs should be appropriately sorted before composition. In the examples below, however, we have only illustrated sorting where it is necessary, to keep the presentation shorter. The provided data files are pre-sorted for their intended use.

Tokenization

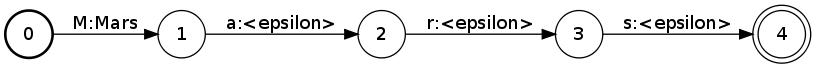

The first example converts a sequence of ASCII characters into a sequence of word tokens with punctuation and whitespace stripped. To do so we will need a lexicon transducer that maps from letters to their corresponding word tokens. A simple way to generate this is using the OpenFst text format. For example, the word Mars would have the form:$ fstcompile --isymbols=ascii.syms --osymbols=wotw.syms >Mars.fst <<EOF 0 1 M Mars 1 2 a <epsilon> 2 3 r <epsilon> 3 4 s <epsilon> 4 EOFThis can be drawn with:

$ fstdraw --isymbols=ascii.syms --osymbols=wotw.syms -portrait Mars.fst | dot -Tjpg >Mars.jpgwhich produces:

.

Suppose that

.

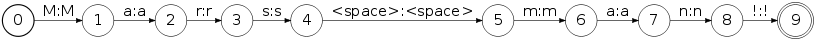

Suppose that Martian.fst and man.fst have similarly been created, then:

$ fstunion man.fst Mars.fst | fstunion - Martian.fst | fstclosure >lexicon.fstproduces a finite-state lexicon that transduces zero or more spelled-out word sequences into to their word tokens.

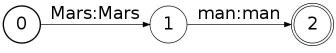

$ fstrmepsilon lexicon.fst | fstdeterminize | fstminimize >lexicon_opt.fstresulting in the compact:

$ fstunion man.fst Mars.fst | fstunion - Martian.fst | fstconcat - punct.fst | fstclosure >lexicon.fst

where:

$ fstcompile --isymbols=ascii.syms --osymbols=wotw.syms >punct.fst <<EOF 0 1 <space> <epsilon> 0 1 . <epsilon> 0 1 , <epsilon> 0 1 ? <epsilon> 0 1 ! <epsilon> 1 EOFis a transducer that deletes common punctuation symbols. The full punctuation transducer is here. Now, the tokenizaton of the an example string Mars man encoded as an FST:

can be done with:

can be done with:

$ fstcompose Marsman.fst lexicon_opt.fst | fstproject --project_output | fstrmepsilon >tokens.fstgiving:

.

To generate a full lexicon of all 7102 distinct words in the War of Worlds, it is convenient to dispense with the union

of individual word FSTs above and instead generate a single text FST from the word symbols in

.

To generate a full lexicon of all 7102 distinct words in the War of Worlds, it is convenient to dispense with the union

of individual word FSTs above and instead generate a single text FST from the word symbols in wotw.syms.

Here is a python script that does that and was used, along with the above steps,

to generate the full optimized lexicon.

Exercise 1

The above tokenization does not handle numeric character input. (a) Create a transducer that maps numbers in the range 0 - 999999 represented as digit strings to their English read form, e.g.:1 -> one 11 -> eleven 111 -> one hundred eleven 1111 -> one thousand one hundred eleven 11111 -> eleven thousand one hundred eleven.(b) Incorporate this transduction into the letter-to-token transduction above and apply to the input Mars is 4225 miles across. represented as letters.

Downcasing Text

The next example converts case-sensitive input to all lowercase output. To do the conversion, we create a flower transducer of the form:$ fstcompile --isymbols=ascii.syms --osymbols=ascii.syms >downcase.fst <<EOF 0 0 ! ! 0 0 A a 0 0 B b 0 0 a a 0 0 b b 0 EOFwhich produces:

A downcasing flower transducer for the full character set is here. This transducer can be applied to the Mars men automaton from the previous example with:

A downcasing flower transducer for the full character set is here. This transducer can be applied to the Mars men automaton from the previous example with:

$ fstproject Marsman.fst | fstcompose - downcase.fst | fstproject --project_output >marsman.fstgiving:

A transducer that downcases at the token level can be created with:

A transducer that downcases at the token level can be created with:

$ fstinvert lexicon_opt.fst | fstcompose - downcase.fst | fstcompose - lexicon_opt.fst | fstrmepsilon | fstdeterminize | fstminimize >downcase_token.fst

Exercise 2

(a) The letter-level downcasing transducer downcases any ASCII input. For which inputs does the token-level downcasing transducer work? What changes would be necessary to cover all inputs fromwotw.syms?

(b) If a token The were applied to downcase_token.fst, what would the output look like? What would it look like if the optimizations (epsilon-removal, determinization and minimization) were omitted from the construction of downcase_token.fst.

Case Restoration in Text

This example creates a transducer that attempts to restore the case of downcased input. This is the first non-trivial task and there is no error-free way to do this. The approach taken here will be to use case statistics gathered from the The War of the Worlds source text to help solve this. In particular, we will use an n-gram language model created on this text that is represented as a finite-state automaton in OpenFst format. Here is a typical path in this 5-gram automaton:$ fstrandgen --select=log_prob wotw.lm | fstprint --isymbols=wotw.syms --osymbols=wotw.syms 0 1 The The 1 2 desolating desolating 2 3 cry cry 3 4 <epsilon> <epsilon> 4 5 worked worked 5 6 <epsilon> <epsilon> 6 7 upon upon 7 8 my my 8 9 mind mind 9 10 <epsilon> <epsilon> 10 11 once once 11 12 <epsilon> <epsilon> 12 13 <epsilon> <epsilon> 13 14 I I 14 15 <epsilon> <epsilon> 15 16 <epsilon> <epsilon> 16 17 slept slept 17 18 <epsilon> <epsilon> 18 19 little little 19This model is constructed to have a transition for every 1-gram to 5-gram seen in 'War of the Worlds' with its weight related to the (negative log) probability of that n-gram occurring in the text corpus. The

$ fstcompose lexicon_opt.fst wotw.lm | fstarcsort --sort_type=ilabel >wotw.fst $ fstinvert downcase.fst | fstcompose - wotw.fst >case_restore.fstThe first FST,

wotw.fst, maps from letters to tokens following the probability distribution of the language model.

The second FST, case_restore.fst is similar but uses only downcased letters. Case prediction can then be performed with:

$ fstcompose marsman.fst case_restore.fst | fstshortestpath | fstproject --project_output | fstrmepsilon >prediction.fstwhich gives:

In other words, the most likely case of the input is determinized with respect to the n-gram model.

There is a serious problem, however, with the above solution. For all but tiny corpora,

the first composition will blow up with the classical composition algorithm since the

output labels in

In other words, the most likely case of the input is determinized with respect to the n-gram model.

There is a serious problem, however, with the above solution. For all but tiny corpora,

the first composition will blow up with the classical composition algorithm since the

output labels in lexicon_opt.fst have been pushed back when it was determinized and this greatly delays matching

with the labels in wotw.lm. There are three possible solutions:

First, we can use the input to restrict the composition chain as:

$ fstcompose downcase.fst marsman.fst | fstinvert | fstcompose - lexicon_opt.fst | fstcompose - wotw.lm | fstshortestpath | fstproject -project_output | fstrmepsilon >prediction.fstThis works fine but has the disadvantage that we don't have a single transducer to apply and we are depending on the input being a string or otherwise small. A second solution, which gives a single optimized transducer, is to replace transducer determinization and minimization of lexicon.fst with automata determinization and minimization (via encoding the input and output label pairs into a single new label) followed by the transducer determinization and minimization of the result of the composition with

wotw.fst:

$ fstencode --encode_labels lexicon.fst enc.dat | fstdeterminize | fstminimize | fstencode --decode - enc.dat >lexicon_compact.fst $ fstcompose lexicon_compact.fst wotw.lm | fstdeterminize | fstminimize >wotw.fst $ fstinvert downcase.fst | fstcompose - wotw.fst >case_restore.fstThis solution is a natural and simple one but has the disadvantage that the transducer determinization and minimization steps are quite expensive. A final solution is to use an FST representation that allows lookahead matching, which composition can exploit to avoid the matching delays:

$ fstconvert --fst_type=olabel_lookahead --save_relabel_opairs=relabel.pairs lexicon_opt.fst >lexicon_lookahead.fst $ fstrelabel --relabel_ipairs=relabel.pairs wotw.lm | fstarcsort --sort_type=ilabel >wotw_relabel.lm $ fstcompose lexicon_lookahead.fst wotw_relabel.lm >wotw.fst $ fstinvert downcase.fst | fstcompose - wotw.fst >case_restore.fstThe relabeling of the input labels of the language model is a by-product of how the lookahead matching works. Note in order to use the lookahead FST formats you must use

--enable-lookahead-fsts in the library compilation and you must set your

LD_LIBRARY_PATH (or equivalent) appropriately.

Exercise 3

(a) Find the weight of the second shortest distinct token sequence in the prediction example above. (b) Find the weight of the second shortest distinct token sequence in the prediction example above without using the--nshortest flag (hint: use fstdifference).

(c) Find all paths within weight 5 of the shortest path in prediction example.

Edit Distance

Spelling Correction

| I | Attachment | History | Action | Size | Date | Who | Comment |

|---|---|---|---|---|---|---|---|

| |

Mars.jpg | r1 | manage | 10.8 K | 2010-12-08 - 06:15 | MichaelRiley | |

| |

Marsman.png | r3 r2 r1 | manage | 13.0 K | 2010-12-09 - 01:52 | MichaelRiley | |

| |

ascii.syms | r2 r1 | manage | 0.5 K | 2010-12-08 - 06:06 | MichaelRiley | |

| |

downcase.jpg | r2 r1 | manage | 3.8 K | 2010-12-08 - 23:39 | MichaelRiley | |

| |

full_downcase.txt | r2 r1 | manage | 0.8 K | 2010-12-08 - 22:48 | MichaelRiley | |

| |

full_punct.txt | r1 | manage | 0.7 K | 2010-12-09 - 01:42 | MichaelRiley | |

| |

lexicon.jpg | r2 r1 | manage | 15.6 K | 2010-12-08 - 06:42 | MichaelRiley | |

| |

lexicon.png | r5 r4 r3 r2 r1 | manage | 18.4 K | 2010-12-08 - 07:06 | MichaelRiley | |

| |

lexicon.txt.gz | r1 | manage | 300.9 K | 2010-12-09 - 06:39 | MichaelRiley | |

| |

lexicon_opt.txt.gz | r2 r1 | manage | 226.2 K | 2010-12-09 - 02:27 | MichaelRiley | |

| |

lexiconmin.png | r1 | manage | 20.1 K | 2010-12-08 - 07:06 | MichaelRiley | |

| |

makelex.py.txt | r1 | manage | 0.4 K | 2010-12-08 - 08:48 | MichaelRiley | |

| |

marsman.png | r2 r1 | manage | 12.9 K | 2010-12-09 - 02:11 | MichaelRiley | |

| |

prediction.png | r1 | manage | 10.4 K | 2010-12-09 - 06:10 | MichaelRiley | |

| |

tokens.jpg | r1 | manage | 4.5 K | 2010-12-09 - 02:09 | MichaelRiley | |

| |

tokens.png | r2 r1 | manage | 14.4 K | 2010-12-08 - 07:47 | MichaelRiley | |

| |

wotw.lm.gz | r1 | manage | 3331.7 K | 2010-12-08 - 05:28 | MichaelRiley | |

| |

wotw.syms | r1 | manage | 88.8 K | 2010-12-08 - 05:13 | MichaelRiley | |

| |

wotw.txt | r1 | manage | 331.0 K | 2010-12-08 - 05:11 | MichaelRiley |

Topic revision: r6 - 2010-12-09 - MichaelRiley

Ideas, requests, problems regarding TWiki? Send feedback